Compression and Transmission

Over the past years, our research group has been investigating algorithms that depart drastically from “Motion Compensated DPCM/Transform (MC-DPCM)” coding. Our prime intention is to take a completely fresh view of how non-linear dependencies between pixels and motion in images and video can be described and exploited for compression. To this end, we employ non-linear machine learning algorithms that explore dependencies among vast numbers of pixels in images and video sequences. Our current approaches are based on non-linear kernel methods, Steered Mixture-of-Experts (SMoE) networks and Restricted Boltzmann Machines, and they strongly resemble recent work on deep neural networks. Our experiments give hope that, in the long run, these networks may provide far better visual quality than DPCM/Transform approaches.

Research Activities

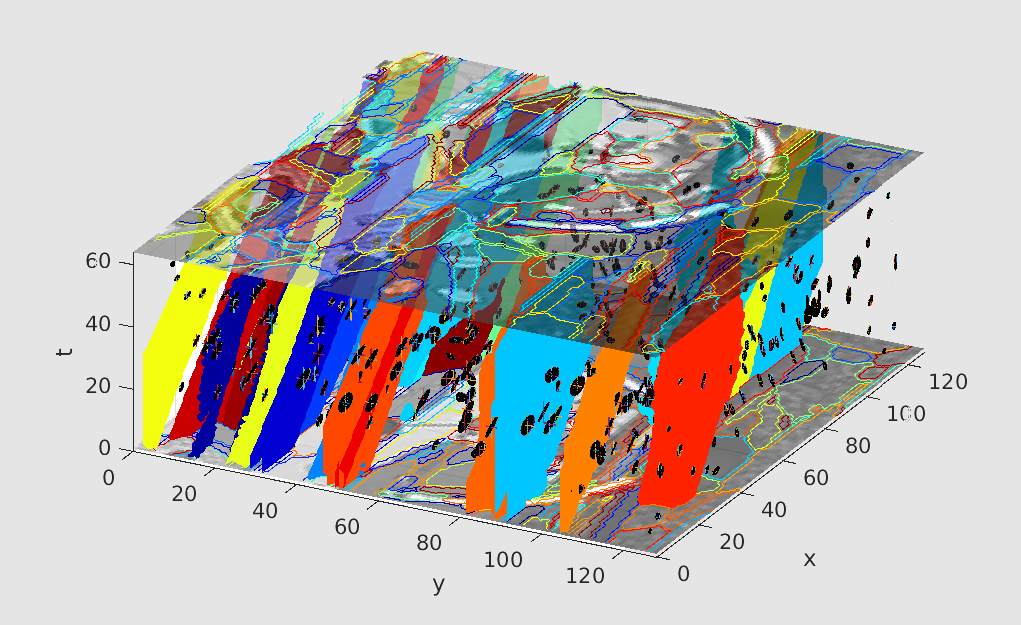

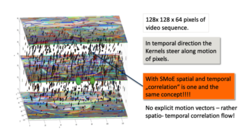

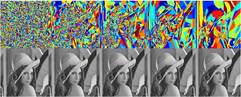

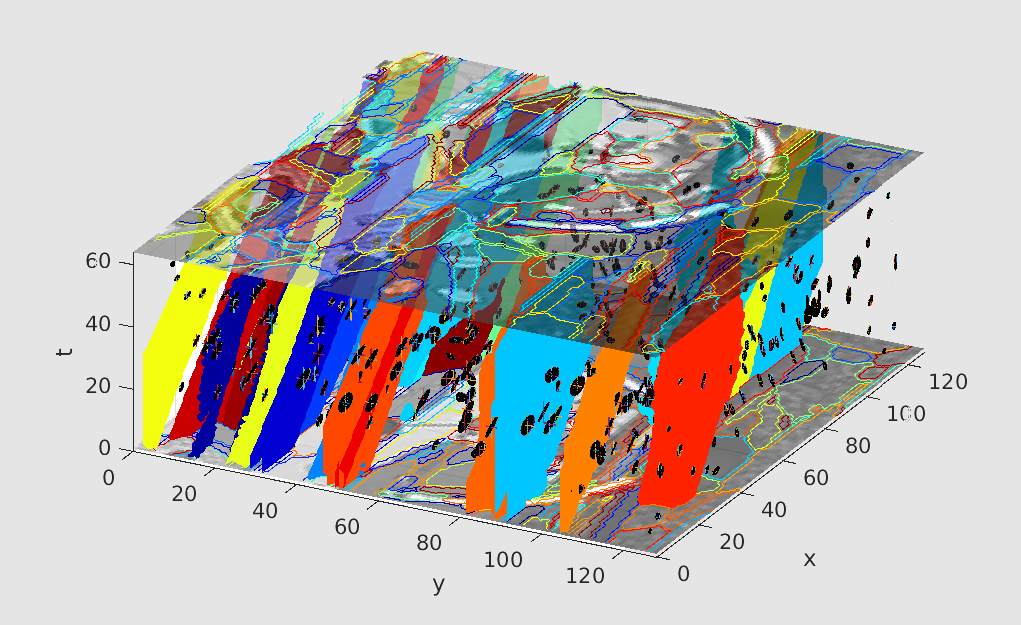

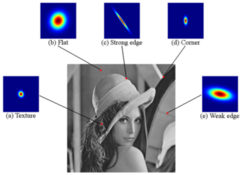

Compression of Images and Video with SMoE Gating Networks

Our challenge is to efficiently identify and exploit the longest-range correlations in images and video, to allow for leaps in compression. Our strategies depart completely from current JPEG and MPEG/ITU-type compression approaches with block processing, block transforms and motion vectors. In our recent work we develop SMoE Gating Networks designed specifically for compression. These networks are based on Steered Mixture-of-Experts (SMoE) networks that distribute swarms of N steered “atoms” into arrays of image pixels (for images) or into 3D stacks of video pixels (for video). Simple “atoms” may consist of steered 2D Gaussian kernels (for images) or steered 3D Gaussian kernels (for video). Kernel parameters include the location of each kernel as well as its steering and bandwidth parameters.
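The kernel parameters described above can be turned into a reconstruction rule in a few lines. The following is a minimal sketch, not the group's implementation: it assumes constant-valued experts (one grey level per kernel) and a gating function that normalises the steered Gaussian responses so they sum to one at each pixel. The names `Kernel`, `kernel_response`, `reconstruct_pixel` and `reconstruct_image` are hypothetical and chosen for illustration only.

```python
import math
from dataclasses import dataclass


@dataclass
class Kernel:
    cx: float     # kernel centre, x coordinate
    cy: float     # kernel centre, y coordinate
    angle: float  # steering angle in radians
    bw_u: float   # bandwidth along the steered axis
    bw_v: float   # bandwidth across the steered axis
    value: float  # constant expert output (assumption of this sketch)


def kernel_response(k, x, y):
    """Unnormalised steered 2D Gaussian evaluated at position (x, y)."""
    dx, dy = x - k.cx, y - k.cy
    # Rotate the offset into the kernel's own coordinate frame.
    u = math.cos(k.angle) * dx + math.sin(k.angle) * dy
    v = -math.sin(k.angle) * dx + math.cos(k.angle) * dy
    return math.exp(-0.5 * ((u / k.bw_u) ** 2 + (v / k.bw_v) ** 2))


def reconstruct_pixel(kernels, x, y):
    """Gate the experts: weight each expert value by its normalised response."""
    responses = [kernel_response(k, x, y) for k in kernels]
    total = sum(responses)
    if total == 0.0:  # every response underflowed; no kernel is nearby
        return 0.0
    return sum(r * k.value for r, k in zip(responses, kernels)) / total


def reconstruct_image(kernels, width, height):
    """Evaluate the model on a regular pixel grid (rows of grey levels)."""
    return [[reconstruct_pixel(kernels, x, y) for x in range(width)]
            for y in range(height)]


# Two isotropic kernels: a dark one on the left, a bright one on the right.
kernels = [
    Kernel(cx=1.0, cy=2.0, angle=0.0, bw_u=1.0, bw_v=1.0, value=0.0),
    Kernel(cx=6.0, cy=2.0, angle=0.0, bw_u=1.0, bw_v=1.0, value=1.0),
]
image = reconstruct_image(kernels, width=8, height=5)
```

With only two kernels, the gating produces a soft edge between a dark and a bright region, and the 8 x 5 image is described by 12 kernel parameters instead of 40 pixel values.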

Sparse Steered Mixture-of-Experts (SMoE) Regression Networks for Image and Video Compression

© Communications Systems Group (TU-Berlin)


Kernel regression has proven successful for image denoising, deblocking and reconstruction. These techniques lay the foundation for new image coding opportunities. We introduce a novel compression scheme: the sparse Steered Mixture-of-Experts (SMoE) regression network for coding image and video data.

Motion Modeling for Motion Vector Coding in HEVC

© CSG


"Motion Modeling for Motion Vector Coding in HEVC", Tok, M.; Sikora, T., submitted to the Picture Coding Symposium (PCS) 2015.

Abstract: During the standardization of HEVC, new motion information coding and prediction schemes such as temporal motion vector prediction have been investigated to reduce the spatial redundancy of motion vector fields used for motion-compensated inter prediction. In this paper, a general motion-model-based vector coding scheme is introduced. This scheme includes estimation, coding and dynamic recombination of parametric motion models to generate vector predictors and merge candidates for all common HEVC inter coding settings. Bit rate reductions of up to 4.9% indicate that higher-order motion models can increase the efficiency of motion information coding in modern hybrid video coding standards.
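To illustrate how a parametric motion model can yield a vector predictor, the sketch below evaluates a six-parameter affine model at a block centre and codes only the difference between the actual vector and the prediction. This is an illustration under stated assumptions, not the scheme from the paper: the affine form, the choice of the block centre, and the names `AffineModel`, `predict_motion_vector` and `vector_residual` are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class AffineModel:
    # x' = a*x + b*y + tx,  y' = c*x + d*y + ty  (assumed model form)
    a: float
    b: float
    tx: float
    c: float
    d: float
    ty: float

    def warp(self, x, y):
        """Map a position in the current frame to the reference frame."""
        return (self.a * x + self.b * y + self.tx,
                self.c * x + self.d * y + self.ty)


def predict_motion_vector(model, block_x, block_y, block_size):
    """Displacement the model implies at the centre of a square block."""
    cx = block_x + block_size / 2.0
    cy = block_y + block_size / 2.0
    wx, wy = model.warp(cx, cy)
    return (wx - cx, wy - cy)


def vector_residual(actual, predicted):
    """Only this difference would need to be coded for the block."""
    return (actual[0] - predicted[0], actual[1] - predicted[1])


# A pure camera pan: every block gets the same predicted vector.
pan = AffineModel(a=1.0, b=0.0, tx=2.5, c=0.0, d=1.0, ty=-1.0)
pan_mv = predict_motion_vector(pan, block_x=32, block_y=16, block_size=16)

# A slight zoom: the predicted vector grows with distance from the origin.
zoom = AffineModel(a=1.01, b=0.0, tx=0.0, c=0.0, d=1.01, ty=0.0)
zoom_mv = predict_motion_vector(zoom, block_x=0, block_y=0, block_size=16)

residual = vector_residual((3.0, -1.0), pan_mv)
```

A translational predictor can only reproduce the pan; the zoom case shows why a higher-order model can predict vectors that vary across the frame from a handful of shared parameters.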

Theoretical Considerations Concerning Pixelwise Temporal Filtering

The following zip files contain exemplary ground-truth motion vector data for the test sequences. Due to website restrictions, the file size is limited to 20 MB. To obtain the complete motion vector fields, please contact esche@nue.tu-berlin.de.

Video Coding Group

The "Video Coding" working group deals with approaches to global and local motion estimation and compensation, with the aim of improving existing video codecs such as H.264/AVC. Currently, the working group is particularly active within the framework of the ITU/ISO/IEC standardization effort "HEVC". This has already resulted in numerous publications and input documents for MPEG. The sub-projects listed below have been carried out so far.

MPEG-4 Audio Lossless Coding (ALS)

© Sikora


The MPEG-4 ALS standard belongs to the family of MPEG-4 audio coding standards published by ISO (www.iso.org). In contrast to lossy methods such as MP3 and AAC, which only try to preserve the subjectively perceived quality, lossless coding allows exact reconstruction of every single bit of the original audio data. The basic method of MPEG-4 ALS was developed at the Communication Systems Group (Fachgebiet Nachrichtenübertragung) of Technische Universität Berlin. The first version of the MPEG-4 ALS standard was published in 2006, and the current description is now part of the 4th edition (2009) of the overarching MPEG-4 audio standard (ISO/IEC 14496-3:2009). A new version (RM23) of the MPEG-4 ALS reference software and codec is now available. MPEG-4 ALS is now supported by FFmpeg, MPlayer, VLC Media Player and other applications.
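The idea of exact reconstruction can be shown with a toy predictive coder. The sketch below is not the MPEG-4 ALS algorithm: it swaps in a fixed second-order integer predictor purely for illustration and omits entropy coding entirely. Encoder and decoder run the same predictor on already-known samples, so adding the residual back restores every sample exactly. The function names are hypothetical.

```python
def predict(history):
    """Fixed second-order integer predictor (an assumption of this sketch):
    linearly extrapolate from the last two known samples."""
    if len(history) >= 2:
        return 2 * history[-1] - history[-2]
    if len(history) == 1:
        return history[-1]
    return 0


def encode(samples):
    """Replace each sample by its prediction error (residual)."""
    residuals = []
    for i, sample in enumerate(samples):
        residuals.append(sample - predict(samples[:i]))
    return residuals


def decode(residuals):
    """Rebuild the samples by re-running the same predictor."""
    samples = []
    for residual in residuals:
        samples.append(residual + predict(samples))
    return samples


pcm = [0, 3, 6, 10, 13, 15, 16, 16, 15]
residuals = encode(pcm)
restored = decode(residuals)
```

For this smooth test signal the residuals are `[0, 3, 0, 1, -1, -1, -1, -1, -1]`, much smaller in magnitude than the samples, which is what would make them cheaper to entropy-code, while `restored == pcm` holds exactly.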

Software

Regularized Gradient Descent Training of Steered Mixture of Experts for Sparse Image Representation (tf-smoe)

© Communications Systems Group (TU-Berlin)


A TensorFlow-based implementation of the Steered Mixture-of-Experts (SMoE) image modelling approach described in the ICIP 2018 paper "Regularized Gradient Descent Training of Steered Mixture of Experts for Sparse Image Representation". The repository contains a reference class for training and regressing an SMoE model, an easy-to-use training script, and a Jupyter notebook with a more elaborate example that helps you get started writing your own code.

Awards

Best Student Paper Award @ IEEE ICME 2017

© FG NUE


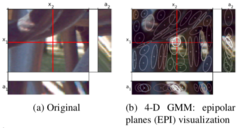

We are delighted to announce that our paper "Steered mixture-of-experts for light field coding, depth estimation, and processing" won the Best Student Paper Award at the IEEE International Conference on Multimedia and Expo, 10.07.2017 - 14.07.2017. Congratulations to Ruben Verhack and the co-authors.

Prof. Thomas Sikora of Technical University Berlin receives prestigious 2016 Google Faculty Research Award in Machine Perception

Congrats to Prof. Sikora and his Communication Systems Lab members at TU Berlin, Lieven Lange and Rolf Jongebloed, and to the colleagues from Uni Ghent/iMinds Lab: Ruben Verhack (joint PhD between TUB and iMinds Lab, Uni Ghent), Prof. Peter Lambert and Dr. Glenn Van Wallendael. The award was given for his work on Video Compression with Steered-Mixture-of-Experts Networks.

Highly Recommended Paper Award @ PCS 2015

© FG NUe


We are delighted to announce that our paper "Lossless Image Compression based on Kernel Least Mean Squares" won the Highly Recommended Paper Award at the IEEE Picture Coding Symposium, 31.05.2015 - 03.06.2015. Congratulations to Ruben Verhack and the co-authors.

Top 10% Paper Award @ ICIP 2014

© Sikora


We are delighted to announce that our paper "LOSSY IMAGE CODING IN THE PIXEL DOMAIN USING A SPARSE STEERING KERNEL SYNTHESIS APPROACH" won the Top 10% Paper Award at the IEEE International Conference on Image Processing, 27.10.2014 - 30.10.2014. Congratulations to Ruben Verhack and the co-authors.

Related Publications

2019

- Simone Croci, Sebastian Knorr, Aljosa Smolic

Study on the Perception of Sharpness Mismatch in Stereoscopic Video

IEEE 11th International Conference on Quality of Multimedia Experience (QoMEX), Berlin, Germany, 05.06.2019 - 07.06.2019

- Rolf Jongebloed, Erik Bochinski, Lieven Lange, Thomas Sikora

Quantized and Regularized Optimization for Coding Images Using Steered Mixtures-of-Experts

2019 Data Compression Conference (DCC), 2019


2018

- Erik Bochinski, Rolf Jongebloed, Michael Tok, Thomas Sikora

Regularized Gradient Descent Training of Steered Mixture of Experts for Sparse Image Representation

IEEE International Conference on Image Processing (ICIP), Athens, Greece, 07.10.2018 - 10.10.2018

DOI: 10.1109/ICIP.2018.8451823 Electronic ISBN: 978-1-4799-7061-2 Print on Demand (PoD) ISBN: 978-1-4799-7062-9 Electronic ISSN: 2381-8549

- Rolf Jongebloed, Ruben Verhack, Lieven Lange, Thomas Sikora

Hierarchical Learning of Sparse Image Representations using Steered Mixture-of-Experts

2018 IEEE International Conference on Multimedia & Expo Workshops (ICMEW), San Diego, CA, USA, 23.07.2018 - 27.07.2018

- Markus Küchhold, Maik Simon, Thomas Sikora

Restricted Boltzmann Machine Image Compression

Picture Coding Symposium (PCS 2018), San Francisco, CA, USA, 24.06.2018 - 27.06.2018

DOI: 10.1109/PCS.2018.8456279

- Michael Tok, Rolf Jongebloed, Lieven Lange, Erik Bochinski, Thomas Sikora

An MSE Approach for Training and Coding Steered Mixtures of Experts

Picture Coding Symposium (PCS 2018), San Francisco, CA, USA, 24.06.2018 - 27.06.2018


2017

- Tim Kießling

Analysis of Initialization and Pre-Training Strategies of a Neural Network for Image Compression

02.08.2017, master thesis tutored by B. Unger, M.Sc. / E. Bochinski, M.Sc., Prof. Dr.-Ing. Thomas Sikora

- Ruben Verhack, Thomas Sikora, Lieven Lange, Rolf Jongebloed, Glenn Van Wallendael, Peter Lambert

[Best Student Paper] STEERED MIXTURE-OF-EXPERTS FOR LIGHT FIELD CODING, DEPTH ESTIMATION, AND PROCESSING

IEEE International Conference on Multimedia and Expo, 10.07.2017 - 14.07.2017, pp. 1183-1188

ISBN: 978-1-5090-6067-2/17

- Ruben Verhack, Simon Van de Keer, Glenn Van Wallendael, Peter Lambert, Thomas Sikora

Color prediction in image coding using Steered Mixture-of-Experts

IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), 2017, New Orleans, LA, USA, 05.03.2017 - 09.03.2017

Electronic ISBN: 978-1-5090-4117-6 USB ISBN: 978-1-5090-4116-9 Print on Demand (PoD) ISBN: 978-1-5090-4118-3 Electronic ISSN: 2379-190X


2016

- Lieven Lange, Ruben Verhack, Thomas Sikora

Video Representation and Coding Using a Sparse Steered Mixture-of-Experts Network

Picture Coding Symposium, Nuremberg, Germany, 04.12.2016 - 07.12.2016, pp. 1-5

Added to IEEE Xplore on 24 April 2017. Electronic ISSN: 2472-7822 DOI: 10.1109/PCS.2016.7906369

- Ruben Verhack, Thomas Sikora, Lieven Lange, Glenn Van Wallendael, Peter Lambert

A Universal Image Coding Approach using Sparse Steered Mixture-of-Experts Regression

IEEE International Conference on Image Processing, Phoenix, AZ, USA, 25.09.2016 - 28.09.2016, pp. 2142-2146

IEEE Catalog Number: CFP16CIP-USB ISBN: 978-1-4673-9960-9


2015

- Stephan A. Rein, Frank H.P. Fitzek, Clemens Gühmann, Thomas Sikora

Evaluation of the wavelet image two-line coder: A low complexity scheme for image compression

Signal Processing: Image Communication, EURASIP, vol. 37, September 2015, pp. 58-74

- Ruben Verhack, Lieven Lange, Peter Lambert, Rik Van de Walle, Thomas Sikora

Lossless Image Compression based on Kernel Least Mean Squares

31st IEEE Picture Coding Symposium, Cairns, Australia, 31.05.2015 - 03.06.2015

- Michael Tok, Volker Eiselein and Thomas Sikora

Motion Modeling for Motion Vector Coding in HEVC

31st IEEE Picture Coding Symposium, Cairns, Australia, 31.05.2015 - 03.06.2015

- Thomas Sikora

A Novel Kernel PCA/KLT Approach for Transform Coding of Waveforms

31st IEEE Picture Coding Symposium, Cairns, Australia, 31.05.2015 - 03.06.2015, pp. 174-178


2014

- Ruben Verhack, Andreas Krutz, Peter Lambert, Rik Van de Walle, Thomas Sikora

[Top 10% Paper] LOSSY IMAGE CODING IN THE PIXEL DOMAIN USING A SPARSE STEERING KERNEL SYNTHESIS APPROACH

21st IEEE International Conference on Image Processing, Paris, France, 27.10.2014 - 30.10.2014, pp. 4807-4811

ISBN: 978-1-4799-5750-7

- Marko Esche

Temporal Pixel Trajectories for Frame Denoising in a Hybrid Video Codec

2014

- Marko Esche, Michael Tok, Thomas Sikora

Theoretical Considerations Concerning Pixelwise Temporal Filtering

Data Compression Conference, Snowbird, Utah, 26.03.2014 - 28.03.2014, pp. 73-82


2013

- Michael Tok, Marko Esche and Thomas Sikora

A Dynamic Model Buffer for Parametric Motion Vector Prediction in Random-Access Coding Scenarios

20th IEEE International Conference on Image Processing, Melbourne, Australia, 15.09.2013 - 18.09.2013

- Marko Esche, Michael Tok and Thomas Sikora

Adaptive Dense Vector Field Interpolation for Temporal Filtering

20th IEEE International Conference on Image Processing, Melbourne, Australia, 15.09.2013 - 18.09.2013

- Rubén Heras Evangelio, Ivo Keller, Thomas Sikora

Multiple Cue Indexing and Summarization of Surveillance Video

10th IEEE International Conference on Advanced Video and Signal-Based Surveillance, Kraków, Poland, 27.08.2013 - 30.08.2013

- Michael Tok, Alexander Glantz, Andreas Krutz and Thomas Sikora

Monte-Carlo-based Parametric Motion Estimation using a Hybrid Model Approach

IEEE Transactions on Circuits and Systems for Video Technology (TCSVT), IEEE, April 2013

- Michael Tok, Marko Esche, Alexander Glantz, Andreas Krutz, Thomas Sikora

A Parametric Merge Candidate for High Efficiency Video Coding

Data Compression Conference, Snowbird, Utah, 20.03.2013 - 22.03.2013

- Marko Esche, Michael Tok, Alexander Glantz, Andreas Krutz, Thomas Sikora

Efficient Quadtree Compression for Temporal Trajectory Filtering

Data Compression Conference, Snowbird, Utah, 20.03.2013 - 22.03.2013


2012

- Andreas Krutz, Alexander Glantz, Michael Tok, Marko Esche and Thomas Sikora

Adaptive Global Motion Temporal Filtering for High Efficiency Video Coding

IEEE Transactions on Circuits and Systems for Video Technology (TCSVT), IEEE, 01.12.2012

- Pascal Kelm, Vanessa Murdock, Sebastian Schmiedeke, Steven Schockaert, Pavel Serdyukov, Olivier Van Laere

Georeferencing in Social Networks

in Social Media Retrieval, Naeem Ramzan, Roelof van Zwol, Jong-Seok Lee, Kai Clüver, Xian-Sheng Hua (ed(s).), Springer, 30.11.2012

ISBN 978-1-4471-4554-7

- Marko Esche, Alexander Glantz, Andreas Krutz, Michael Tok, Thomas Sikora

Quadtree-based Temporal Trajectory Filtering

Proceedings of the 19th IEEE International Conference on Image Processing (ICIP), Orlando, Florida, 30.09.2012 - 03.10.2012

- Marko Esche, Mustafa Karaman, Thomas Sikora

Semi-Automatic Object Tracking in Video Sequences by Extension of the MRSST Algorithm

in Analysis, Retrieval and Delivery of Multimedia Contents, Nicola Adami, Andrea Cavallaro, Riccardo Leonardi, Pierangelo Migliorati (ed(s).), Springer, Berlin, 01.06.2012

- Andreas Krutz, Alexander Glantz, Michael Tok, Thomas Sikora

Adaptive Global Motion Temporal Filtering

Proceedings of the 29th IEEE Picture Coding Symposium (PCS 2012), Kraków, Poland, 07.05.2012 - 09.05.2012

ISBN: 978-1-4577-2048-2

- Michael Tok, Andreas Krutz, Alexander Glantz, Thomas Sikora

Lossy Parametric Motion Model Compression for Global Motion Temporal Filtering

Proceedings of the 29th IEEE Picture Coding Symposium (PCS 2012), Kraków, Poland, 07.05.2012 - 09.05.2012

ISBN: 978-1-4577-2048-2

- Michael Tok, Alexander Glantz, Andreas Krutz, Thomas Sikora

Parametric Motion Vector Prediction for Hybrid Video Coding

Proceedings of the 29th IEEE Picture Coding Symposium (PCS 2012), Kraków, Poland, 07.05.2012 - 09.05.2012

ISBN: 978-1-4577-2048-2

- Marko Esche, Alexander Glantz, Andreas Krutz, Michael Tok, Thomas Sikora

Weighted Temporal Long Trajectory Filtering for Video Compression

Proceedings of the 29th IEEE Picture Coding Symposium (PCS 2012), Kraków, Poland, 07.05.2012 - 09.05.2012

ISBN: 978-1-4577-2048-2

- Marko Esche, Alexander Glantz, Andreas Krutz and Thomas Sikora

Adaptive Temporal Trajectory Filtering for Video Compression

IEEE Transactions on Circuits and Systems for Video Technology (TCSVT), IEEE, vol. 22, no. 5, May 2012, pp. 659-670

- Michael Andersch

Lossy Parametric Motion Model Compression

09.02.2012, bachelor thesis tutored by Dipl.-Ing. Michael Tok, Dipl.-Ing. Alexander Glantz, Prof. Dr.-Ing. Thomas Sikora


2011

- Andreas Krutz, Alexander Glantz, Michael Frater, Thomas Sikora

Rate-Distortion Optimized Video Coding using Automatic Sprites

IEEE Journal of Selected Topics in Signal Processing, IEEE, vol. 5, no. 7, November 2011, pp. 1309-1321

ISSN 1932-4553

- Andreas Krutz, Alexander Glantz, Thomas Sikora

Theoretical Consideration of Global Motion Temporal Filtering

Proceedings of the 18th IEEE International Conference on Image Processing (IEEE ICIP2011), Brussels, Belgium, 11.09.2011 - 14.09.2011, pp. 3534-3537

IEEE catalog number: CFP11CIP-USB ISBN: 978-1-4577-1302-6

- Marko Esche, Andreas Krutz, Alexander Glantz, Thomas Sikora

Temporal trajectory filtering for bi-directional predicted frames

Proceedings of the 18th IEEE International Conference on Image Processing (IEEE ICIP2011), Brussels, Belgium, 11.09.2011 - 14.09.2011, pp. 1669-1672

IEEE catalog number: CFP11CIP-USB ISBN: 978-1-4577-1302-6

- Alexander Glantz, Michael Tok, Andreas Krutz, Thomas Sikora

A Block-adaptive Skip Mode for Inter Prediction based on Parametric Motion Models

Proceedings of the 18th IEEE International Conference on Image Processing (IEEE ICIP2011), Brussels, Belgium, 11.09.2011 - 14.09.2011, pp. 1225-1228

IEEE catalog number: CFP11CIP-USB ISBN: 978-1-4577-1302-6

- Xu Han

Piece-wise Planar Fusion of Multi Camera Point Cloud Data for Depth Map Compression

25.08.2011, master thesis tutored by M. Sc. Engin Kurutepe, Prof. Dr.-Ing. Thomas Sikora

- Georges Haboub

Entwicklungen verteilter Bildcodierungsmethoden basierend auf LDPC

2011

- Tao Liu

A new Prediction Mode using Short-term background Sprites for Hybrid Video Coding

30.05.2011, master thesis tutored by Dr.-Ing. Andreas Krutz, Prof. Dr.-Ing. Thomas Sikora

- Michael Tok, Alexander Glantz, Andreas Krutz, Thomas Sikora

Feature-Based Global Motion Estimation Using the Helmholtz Principle

Proceedings of the IEEE International Conference on Acoustics Speech and Signal Processing (ICASSP 2011), Prague, Czech Republic, 22.05.2011 - 27.05.2011

ISSN: 1520-6149 E-ISBN: 978-1-4577-0537-3 Print ISBN: 978-1-4577-0538-0

- Pascal Kelm, Sebastian Schmiedeke, Thomas Sikora

Multi-modal, Multi-resource Methods for Placing Flickr Videos on the Map

ACM International Conference on Multimedia Retrieval (ICMR), 17.04.2011 - 20.04.2011, pp. 8


2010

- Alexander Glantz, Andreas Krutz, Thomas Sikora

Adaptive Global Motion Temporal Prediction for Video Coding

Proceedings of the 28th IEEE Picture Coding Symposium (PCS 2010), Nagoya, Japan, 07.12.2010 - 10.12.2010

ISBN: 978-1-4244-7135-5

- Andreas Krutz, Alexander Glantz, Thomas Sikora

Recent Advances in Video Coding using Static Background Models

Proceedings of the 28th IEEE Picture Coding Symposium (PCS 2010), Nagoya, Japan, 07.12.2010 - 10.12.2010

ISBN: 978-1-4244-7135-5

- Marko Esche, Andreas Krutz, Alexander Glantz, Thomas Sikora

A Novel In-loop Filter for Video-Compression based on Temporal Pixel Trajectories

Proceedings of the 28th IEEE Picture Coding Symposium (PCS 2010), Nagoya, Japan, 07.12.2010 - 10.12.2010

ISBN: 978-1-4244-7135-5

- Jong-Seok Lee, Francesca De Simone, Naeem Ramzan, Zhijie Zhao, Engin Kurutepe, Thomas Sikora, Jörn Ostermann, Ebroul Izquierdo, Touradj Ebrahimi

Subjective Evaluation of Scalable Video Coding for Content Distribution

ACM Multimedia 2010, 25.10.2010 - 29.10.2010

- Martin Haller, Andreas Krutz, Thomas Sikora

Robust Global Motion Estimation using Motion Vectors of Variable Size Blocks and Automatic Motion Model Selection

Proceedings of the 17th IEEE International Conference on Image Processing (ICIP 2010), Hong Kong, 26.09.2010 - 29.09.2010

ISBN: 978-1-4244-7993-1 ISSN: 1522-4880

- Alexander Glantz, Andreas Krutz, Thomas Sikora

Global Motion Temporal Filtering for In-loop Deblocking

Proceedings of the 17th IEEE International Conference on Image Processing (ICIP 2010), Hong Kong, 26.09.2010 - 29.09.2010

ISBN: 978-1-4244-7993-1 ISSN: 1522-4880

- Michael Tok, Alexander Glantz, Marina Georgia Arvanitidou, Andreas Krutz, Thomas Sikora

Compressed Domain Global Motion Estimation using the Helmholtz Tradeoff Estimator

Proceedings of the 17th IEEE International Conference on Image Processing (ICIP 2010), Hong Kong, 26.09.2010 - 29.09.2010

ISBN: 978-1-4244-7993-1 ISSN: 1522-4880

- Alexander Glantz, Andreas Krutz, Thomas Sikora, Paulo Nunes, Fernando Pereira

Automatic MPEG-4 Sprite Coding - Comparison of Integrated Object Segmentation Algorithms

Multimedia Tools and Applications, Special Issue on "Advances in Image and Video Processing Techniques", Springer Netherlands, vol. 49, no. 3, September 2010, pp. 483-512

ISSN: 1380-7501 (Print) ISSN: 1573-7721 (Online)

- Rüdiger Knörig

Multiple Description Coding mittels kaskadierter korrelierender Transformationen

2010

- Andreas Krutz, Alexander Glantz, Thomas Sikora

Background Modeling for Video Coding: From Sprites to Global Motion Temporal Filtering

Proceedings of the IEEE International Symposium on Circuits and Systems (ISCAS 2010), Paris, France, 30.05.2010 - 02.06.2010, pp. 2179-2182

ISBN: 978-1-4244-5309-2

- Stephan Rein

Low Complexity Text and Image Compression for Wireless Devices and Sensors

2010

- Dipl.-Ing. Andreas Krutz

From Sprites to Global Motion Temporal Filtering

2010


2009


- Tilman Liebchen

MPEG-4 ALS The Standard for Lossless Audio Coding (invited)

Journal of the Acoustical Society of Korea, vol. 28, no. 7, October 2009, pp. 618-629

- Maxime Lardeur, Slim Essid, Gael Richard, Martin Haller, Thomas Sikora

Incorporating prior knowledge on the digital media creation process into audio classifiers

Proceedings of the IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP 2009), Taipei, Taiwan, 19.04.2009 - 24.04.2009, pp. 1653-1656

ISBN 978-1-4244-2354-5


2008

- Lutz Goldmann, Antonio Rama, Thomas Sikora, Francesc Tarres

On the Detection and Localization of Facial Occlusions and its Use within Different Scenarios

IEEE 10th International Workshop on Multimedia Signal Processing (MMSP 2008), Proceedings, Cairns, Australia, 08.10.2008 - 10.10.2008, pp. 592-597

ISBN 978-1-4244-2295-1

- Andreas Krutz, Sebastian Knorr, Matthias Kunter, Thomas Sikora

Camera Motion-Constraint Video Codec Selection (invited)

IEEE 10th International Workshop on Multimedia Signal Processing (MMSP 2008), Proceedings, Cairns, Queensland, Australia, 08.10.2008 - 10.10.2008, pp. 58-63

Special Session on Global Motion Estimation and Mosaicing for Applications in Video Analysis and Coding ; ISBN 978-1-4244-2295-1

- Sebastian Knorr, Matthias Kunter, Thomas Sikora

Stereoscopic 3D from 2D Video with Super-Resolution Capability

Signal Processing: Image Communication, Amsterdam: Elsevier Science B.V., vol. 23, no. 9, October 2008, pp. 665-676

http://dx.doi.org/10.1016/j.image.2008.07.004 ; ISSN: 0923-5965

- Juan Jose Burred

From Sparse Models to Timbre Learning: New Methods for Musical Source Separation

2008


2007

- Zouhair M. Belkoura

Analysis and Application of Turbo Coder based Distributed Video Coding

2007

- Thomas Sikora

Where Computer Vision meets Video Compression (invited lecture)

New AI Seminar, School of Computer Science and Engineering, University of New South Wales, Australia, 23.03.2007


2006

- Y. Yuan, B. Cockburn, Thomas Sikora, Mrinal Mandal

A GoP Based FEC Technique for Packet Based Video Streaming

10th WSEAS International Conference on Communications, 10.07.2006 - 15.07.2006, pp. 187-192

- Christoph Fehn

Depth-Image-Based Rendering (DIBR), Compression, and Transmission for a Flexible Approach on 3DTV

2006

- Elisa Gelasca Drelie, Mustafa Karaman, Touradj Ebrahimi, Thomas Sikora

A Framework for Evaluating Video Object Segmentation Algorithms

CVPR 2006 Workshop (Perceptual Organization in Computer Vision POCV), New York, 17.06.2006 - 22.06.2006, p. 198


2005

- Tilman Liebchen, T. Moriya, N. Harada, Y. Kamamoto, Y. Reznik

The MPEG-4 Audio Lossless Coding (ALS) Standard - Technology and Applications

119th AES Convention, New York, 07.10.2005 - 10.10.2005

T. Moriya, N. Harada, Y. Kamamoto: NTT Communication Science Labs; Y. Reznik: RealNetworks Inc.

- 3D Videocommunication: Algorithms, concepts and real-time systems in human centred communication

Oliver Schreer, Peter Kauff, Thomas Sikora (ed(s).), John Wiley & Sons, July 2005, 364 pages

ISBN: 978-0-470-02271-9

- Tilman Liebchen, Y. Reznik

Improved Forward-Adaptive Prediction for MPEG-4 Audio Lossless Coding

118th AES Convention, Barcelona, 28.05.2005 - 31.05.2005

Y. Reznik: RealNetworks Inc.

- Thomas Sikora

Trends and Perspectives in Image and Video Coding (invited)

Proceedings of the IEEE, IEEE, vol. 93, no. 1, January 2005, pp. 6-17

- Peter Noll, Tilman Liebchen

Lossless and Perceptual Coding of Digital Audio

in Beiträge zur Geschichte und neueren Entwicklung der Sprachakustik und Informationsverarbeitung - Werner Endres zum 90. Geburtstag, w.e.b. Universitätsverlag, Dresden, 2005


2004

- L. Onural, Thomas Sikora, Aljoscha Smolic

"An Overview of a New European Consortium: Integrated Three-Dimensional Television - Capture, Transmission and Display (3DTV)"

European Workshop on the Integration of Knowledge, Semantics and Digital Media Technology (EWIMT'04), Proceedings, London, November 2004

- Sebastian Knorr, Carsten Clemens, Matthias Kunter, Thomas Sikora

Robust Concealment for Erroneous Block Bursts in Stereoscopic Images

2nd International Symposium on 3D Data Processing, Visualization, and Transmission (3DPVT'04), Thessaloniki, Greece, 06.09.2004 - 09.09.2004

- Tilman Liebchen, Y. Reznik, T. Moriya, D. Yang

MPEG-4 Audio Lossless Coding

116th AES Convention, Berlin, Germany, 08.05.2004 - 11.05.2004

Y. Reznik: RealNetworks Inc.; T. Moriya: NTT Cyber Space Labs; D. Yang: University of Southern California

- Tilman Liebchen

An Introduction to MPEG-4 Audio Lossless Coding

IEEE ICASSP 2004, Montreal, Canada, May 2004

- T. Moriya, D. Yang, Tilman Liebchen

Extended Linear Prediction Tools for Lossless Audio Coding

IEEE ICASSP 2004, Montreal, Canada, May 2004

T. Moriya: NTT Cyber Space Labs; D. Yang: University of Southern California

- D. Yang, T. Moriya, Tilman Liebchen

A Lossless Audio Compression Scheme with Random Access Property

IEEE ICASSP 2004, Montreal, Canada, May 2004

D. Yang: University of Southern California; T. Moriya: NTT Cyber Space Labs

- T. Moriya, D. Yang, Tilman Liebchen

A Design of Lossless Compression for High Quality Audio Signals

18th International Congress on Acoustics, Kyoto, April 2004

T. Moriya: NTT Cyber Space Labs; D. Yang: University of Southern California


2003

- Tilman Liebchen

MPEG-4 Lossless Coding for High-Definition Audio

115th AES Convention, New York, October 2003

- Stephan Rein

Performance Measurements of Voice Quality over Error-Prone Wireless Networks using Robust Header Compression

01.02.2003, student research project tutored by Batke, Fitzek, Prof. Sikora


2002

- Introduction to MPEG-7: Multimedia Content Description Interface

B. S. Manjunath, Philippe Salembier, Thomas Sikora (ed(s).), John Wiley & Sons, April 2002, 396 pages

ISBN: 978-0-471-48678-7

- Tilman Liebchen

Lossless Audio Coding Using Adaptive Multichannel Prediction

113th AES Convention, Los Angeles, 2002


2000

- Peter Noll

Speech and Audio Coding for Multimedia Communications (invited)

Proceedings International Cost 254 Workshop on Intelligent Communication Technologies and Applications, Neuchâtel, Switzerland, 2000, pp. 253-263


1999

- Peter Noll, Tilman Liebchen

Digital Audio: From Lossless to Transparent Coding (invited)

Proceedings IEEE Signal Processing Workshop, Poznan, 1999, pp. 53-60


1995

- Peter Noll

Digital Audio Coding for Visual Communications (invited)

Proceedings of the IEEE, vol. 83, no. 6, June 1995, pp. 925-943


1990

- Peter Noll

Data Compression Techniques (invited)

1st Working Conference on Common Standards for Quantitative Electrocardiography, "Digital ECG Data: Communication, Encoding and Storage", Leuven (Belgium), 1990, pp. 39-57

- Peter Noll

Data Compression Techniques for New Standards in Speech and Image Coding

VI. Internationales Weiterbildungsprogramm Berlin '90, TU Berlin, Zentrum für Technologische Zusammenarbeit, 1990, pp. 245 - 264
